What 1,565 Research Papers Teach Us

The artificial intelligence revolution isn't just about building better models—it's about learning how to talk to them. As large language models like GPT-4 and Claude become more powerful, the art and science of "prompt engineering" has emerged as one of the most critical skills in AI. But with hundreds of techniques scattered across academic papers, where do you even start?

A groundbreaking new research paper has done the heavy lifting for us. Researchers conducted the most comprehensive review of prompt engineering to date, analyzing 1,565 research papers to create the first complete taxonomy of prompting techniques. Their findings reveal not just what works, but why—and more importantly, how you can apply these insights immediately.

The State of Prompt Engineering: Chaos and Opportunity

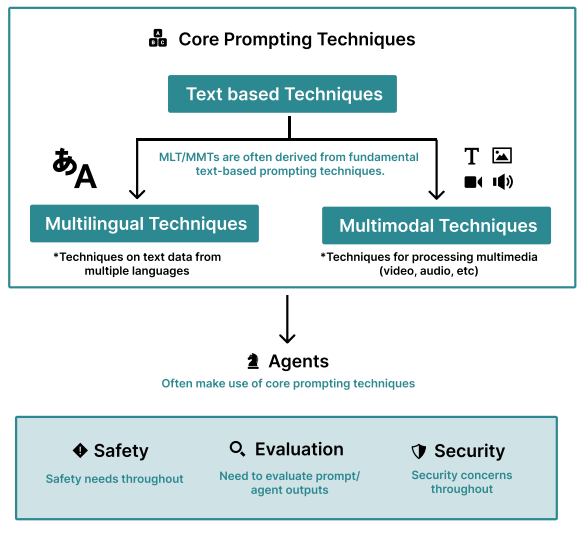

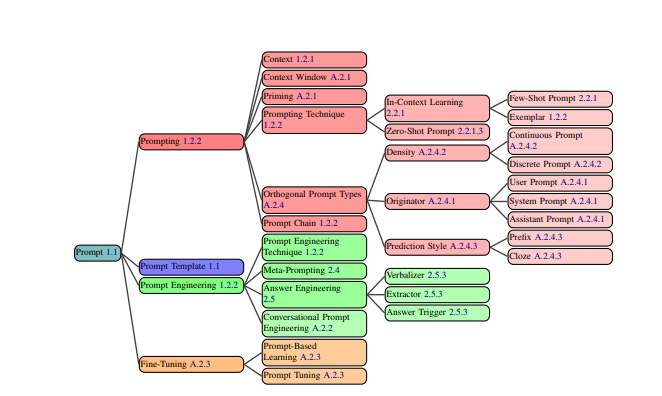

Here's the reality: prompt engineering is simultaneously one of the most important and most chaotic fields in AI right now. The researchers identified 58 distinct text-based prompting techniques, with new methods emerging monthly. Yet there's been no standardized vocabulary or systematic approach—until now.

"The use of prompts continues to be poorly understood, with only a fraction of existing terminologies and techniques being well-known among practitioners"

This fragmentation means most people are only scratching the surface of what's possible.

The Big Picture: What Actually Works

When the researchers analyzed citation patterns across thousands of papers, a clear hierarchy emerged. The techniques that dominate both research and practice are:

- Few-Shot Learning - Showing the AI examples of what you want

- Chain-of-Thought (CoT) - Getting the AI to "think out loud"

- Zero-Shot prompting - Direct instructions without examples

- Self-Consistency - Running multiple attempts and taking the majority answer

These aren't just academic favorites—they're the workhorses of practical prompt engineering.

The Six Categories That Rule Them All

The research reveals that all prompting techniques fall into these major categories:

In-Context Learning: Teaching Through Examples

This is your bread and butter. Instead of training a new model, you teach it within the conversation itself. The key insight? Quality trumps quantity. The researchers found that exemplar selection, ordering, and formatting can cause accuracy to vary from "sub-50% to 90%+" on the same task.

Pro tip: Use semantically similar examples to your actual use case, maintain consistent formatting, and aim for balanced label distribution in your examples.

Thought Generation: Making AI Reasoning Visible

The breakthrough here is Chain-of-Thought prompting—simply adding "Let's think step by step" can dramatically improve performance on complex reasoning tasks. But the researchers discovered variations that work even better:

- Step-Back Prompting: First ask a high-level conceptual question

- Tree-of-Thought: Generate multiple reasoning paths and evaluate them

- Program-of-Thoughts: Generate code as reasoning steps

Decomposition: Breaking Down Complex Problems

When facing a complex task, break it into smaller pieces. Techniques like "Least-to-Most" prompting first decompose a problem into sub-problems, then solve them sequentially. The researchers found this particularly effective for mathematical reasoning and symbolic manipulation.

Ensembling: Strength in Numbers

Don't rely on a single response. Self-Consistency runs the same prompt multiple times with different reasoning paths, then takes the majority answer. It's computationally expensive but can significantly improve accuracy on important tasks.

Self-Criticism: Built-in Quality Control

Let the AI check its own work. Techniques like Self-Refine prompt the model to provide feedback on its own output, then improve it iteratively. Chain-of-Verification generates verification questions to check for errors.

Advanced Applications: Agents and Beyond

The cutting edge involves AI systems that can use external tools, browse the internet, or write and execute code. These "agent" approaches represent the future of prompt engineering but require careful security considerations.

The Dark Side: Security and Reliability Challenges

Here's what most prompt engineering guides won't tell you: the field has serious security problems. The researchers dedicated an entire section to "prompt hacking"—techniques that can bypass AI safety measures or extract training data.

Two major threat categories emerged:

- Prompt Injection: Malicious users overriding your instructions

- Jailbreaking: Getting AI to violate its safety guidelines

Even more concerning? "No prompt-based defense is fully secure" according to their analysis of hundreds of thousands of malicious prompts. This isn't just an academic concern—it has real implications for anyone deploying AI systems in production.

The Surprising Sensitivity Problem

Perhaps the most practical finding: AI systems are incredibly sensitive to minor prompt changes. The researchers found that "extra spaces, changing capitalization, modifying delimiters, or swapping synonyms can significantly impact performance." In some cases, these tiny changes caused performance to range from nearly 0% to 80%+ accuracy.

This sensitivity explains why prompt engineering often feels more like art than science. Small, seemingly meaningless changes can have dramatic effects, making systematic optimization crucial.

Real-World Application: A Case Study in Prompt Engineering

To demonstrate these principles in action, the researchers documented a complete prompt engineering process for a challenging real-world problem: detecting signs of suicidal crisis in text. Over 47 recorded development steps and 20 hours of work, they improved performance from 0% to an F1 score of 0.53.

Their key lessons:

- Domain expertise is crucial - Understanding the problem matters more than technique knowledge

- Iteration is everything - Small, systematic changes compound into major improvements

- Automated tools can help - DSPy framework outperformed manual engineering

- Guard rails can interfere - Model safety features can block legitimate use cases

Practical Implementation: Your Action Plan

Based on this comprehensive research, here's your step-by-step approach to prompt engineering:

For Beginners: Start Here

- Begin with Few-Shot examples - Show 3-5 examples of your desired input/output

- Add Chain-of-Thought - Include "Let's think step by step" or similar reasoning prompts

- Test systematically - Small changes have big effects, so document what works

- Consider your domain - Collaborate with subject matter experts when possible

For Intermediate Users: Level Up

- Implement Self-Consistency - Run important prompts multiple times and take the majority answer

- Use decomposition - Break complex tasks into smaller, sequential steps

- Add self-criticism - Have the AI check and improve its own work

- Optimize systematically - Use tools like DSPy for automated prompt optimization

For Advanced Applications: Go Further

- Explore agent capabilities - Integrate external tools and APIs

- Implement security measures - Use detection tools and guardrails for production systems

- Consider multimodal inputs - Extend techniques to images, audio, and video

- Plan for multilingual use - Adapt techniques for non-English applications

The Future of Prompt Engineering

The researchers identified several emerging trends that will shape the field:

- Automated Optimization: Tools like DSPy and AutoPrompt are beginning to outperform manual prompt engineering. Expect this trend to accelerate.

- Multimodal Integration: Techniques are rapidly expanding beyond text to include images, audio, and video inputs.

- Security Solutions: While current defenses are imperfect, new approaches combining detection, guardrails, and dialogue management show promise.

- Standardization: This research represents the first step toward standardizing terminology and best practices across the field.

Key Takeaways for Practitioners

- Start simple, iterate systematically - Don't jump to complex techniques without mastering the basics

- Domain knowledge beats technique knowledge - Understanding your problem is more valuable than knowing every prompting method

- Small changes have big impacts - Test variations methodically and document what works

- Security isn't optional - Plan for adversarial inputs from day one

- Automation is coming - Learn to use automated optimization tools alongside manual techniques

- Collaboration is key - The best results come from combining prompt engineering expertise with domain knowledge

The field of prompt engineering is evolving rapidly, but this comprehensive research provides the foundation you need to navigate it effectively. Whether you're building AI applications, conducting research, or simply trying to get better results from ChatGPT, these evidence-based techniques will give you a significant advantage.

The AI revolution isn't just about having access to powerful models—it's about knowing how to unlock their potential. With these insights from 1,565 research papers, you're now equipped to do exactly that.