The pattern is familiar enough that it has become a standard deployment story. A team rolls out an AI assistant, tracks the output, and sees real gains: proposals that used to take most of an afternoon are drafted before lunch, research summaries arrive the same morning they're requested, first cuts on complex documents land in minutes rather than days. The tools are working. Leadership is satisfied. The AI investment is declared a success.

Then someone looks at the approval queue.

It is the same length it was six months ago. The proposal drafted three times faster is still sitting in review. The pricing decision still requires four rounds of stakeholder input and eight days to close. The report that now takes an hour to produce still spends three days moving between the team that generated it and the teams that need to act on it. The coordination problem is exactly where it was before the tools arrived.

This is not a failure of the tools. The tools worked at what they were designed to do. The problem is that productivity and coordination are different problems, and solving one does not move the other.

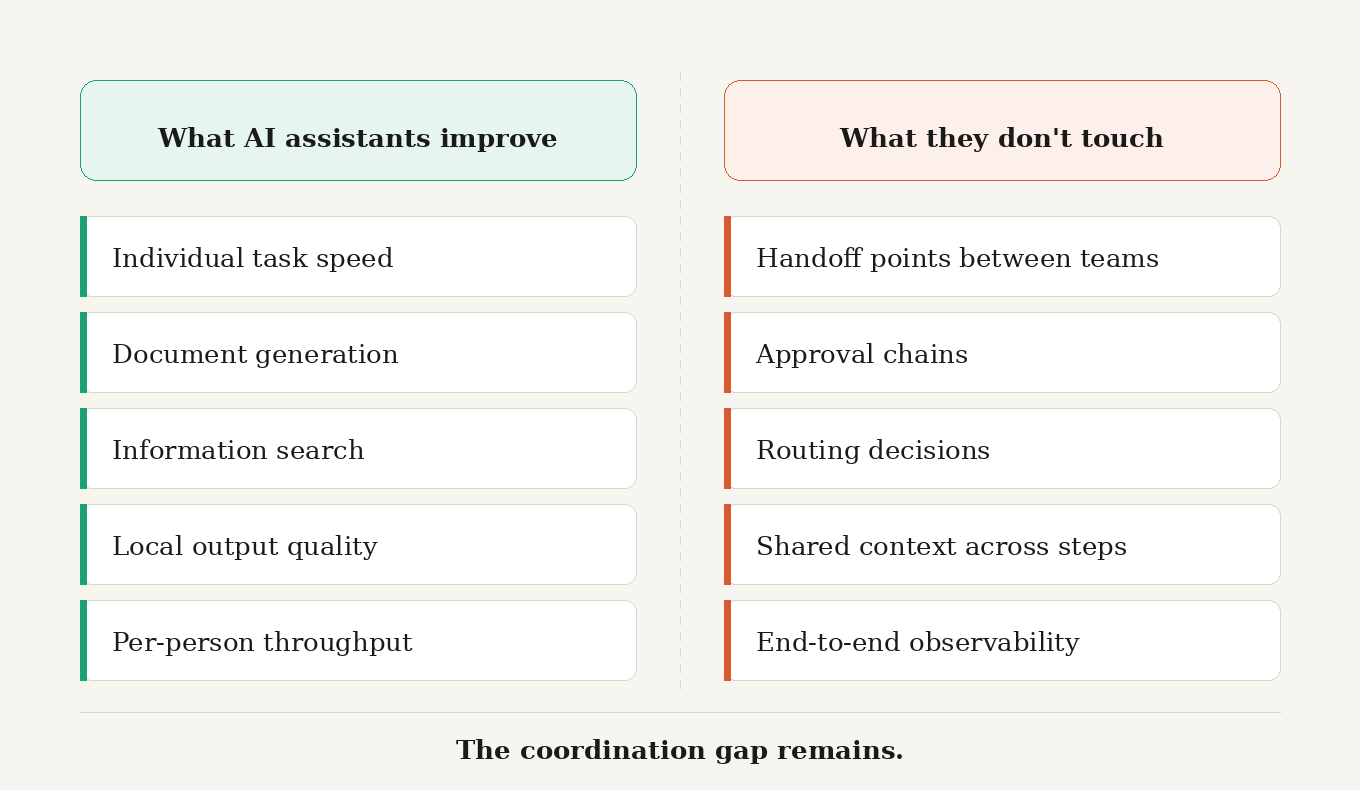

Productivity is a local measure. It tracks how quickly an individual completes a discrete task: drafting, searching, summarizing, generating. An AI assistant is designed to accelerate that cycle, and for most tasks it does. Coordination is a system-level measure. It tracks how work moves between people and systems, how tasks get routed, how decisions get reached, how context travels from one actor to the next without being dropped or reconstructed at every hand-off. These are not the same thing. They do not respond to the same interventions.

The confusion persists because the two problems are easy to conflate when per-person output metrics improve. More output produced faster looks like progress. And it is progress, at the individual level. But individual output and systemic coordination are different variables. A team can double its document output while the workflow that acts on those documents remains exactly as slow and fragile as before.
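The gap between the two measures can be made concrete with a little arithmetic. The sketch below is illustrative only: it borrows the report example above (an hour to produce, three days in transit), and it assumes a pre-AI drafting time of three hours and treats the three days as 72 elapsed hours. Neither figure is measured data, and the function name is hypothetical.

```python
# Illustrative arithmetic only. The drafting time before AI (3 hours) and the
# 72-hour transit time are assumptions made for this example, not measurements.

def end_to_end_hours(drafting_hours: float, waiting_hours: float) -> float:
    """Total elapsed time from request to action: producing the work plus moving it."""
    return drafting_hours + waiting_hours

WAITING_HOURS = 3 * 24  # three days in review and hand-offs, unchanged by the assistant

before = end_to_end_hours(drafting_hours=3.0, waiting_hours=WAITING_HOURS)
after = end_to_end_hours(drafting_hours=1.0, waiting_hours=WAITING_HOURS)

local_speedup = 3.0 / 1.0         # what the individual productivity metric reports
system_speedup = before / after   # what the people waiting on the report experience

print(f"local speedup:  {local_speedup:.2f}x")
print(f"system speedup: {system_speedup:.2f}x")
```

Under these assumptions the drafting step is three times faster, while the end-to-end cycle moves from 75 hours to 73, an improvement of under three percent.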

To understand why, it is worth being precise about what coordination actually requires. A coordinated system has to decompose a goal into tasks and route each one to the right actor at the right time. It has to track whether each step completed or stalled, retry the ones that failed, and escalate the ones that require a decision beyond the system's current scope. It has to maintain shared context across the full span of a workflow so that each actor knows what happened before them and what the downstream step needs. And it has to be observable end to end, so that when something goes wrong there is a clear record of what happened, in what sequence, and on what authority.

An assistant does none of that. It helps one person with one task. The hand-off to the next person, the routing of the next decision, the tracking of where the overall workflow stands: those responsibilities remain with the humans in between. The human middleware stays in place.
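To make those requirements less abstract, here is a minimal sketch of what a coordination layer tracks that an assistant does not: an ordered set of steps routed to named actors, a shared context passed between them, retries, escalation, and an event log. Every name here (`Step`, `Workflow`, the actors, the step functions) is hypothetical and chosen for illustration. It is a toy under stated assumptions, not a description of any particular product.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Step:
    name: str
    actor: str                      # who the step is routed to
    run: Callable[[dict], dict]     # reads shared context, returns updates to it
    max_attempts: int = 2

@dataclass
class Workflow:
    steps: list[Step]
    context: dict = field(default_factory=dict)                      # shared state
    log: list[tuple[str, str, str]] = field(default_factory=list)   # audit trail

    def execute(self) -> str:
        for step in self.steps:
            for attempt in range(1, step.max_attempts + 1):
                try:
                    self.context.update(step.run(self.context))
                    self.log.append((step.name, step.actor, "completed"))
                    break
                except Exception as exc:
                    self.log.append((step.name, step.actor, f"failed attempt {attempt}: {exc}"))
            else:
                # Retries exhausted: stop and hand the decision to a human.
                self.log.append((step.name, step.actor, "escalated"))
                return "escalated"
        return "completed"

# Hypothetical steps: the pricing step fails once, then succeeds on retry.
pricing_calls = {"count": 0}

def draft(ctx: dict) -> dict:
    return {"draft": "proposal v1"}

def price(ctx: dict) -> dict:
    pricing_calls["count"] += 1
    if pricing_calls["count"] == 1:
        raise RuntimeError("pricing service timeout")
    return {"price": 1200}

def review(ctx: dict) -> dict:
    # The reviewer sees what earlier steps produced without reconstructing it.
    return {"approved": "draft" in ctx and "price" in ctx}

wf = Workflow([
    Step("draft", "analyst", draft),
    Step("price", "finance", price),
    Step("review", "legal", review),
])
status = wf.execute()
for entry in wf.log:
    print(entry)
```

The point of the sketch is where the responsibilities live. Routing, retry, escalation, and the record of what happened sit in the workflow itself rather than in the people between the steps.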

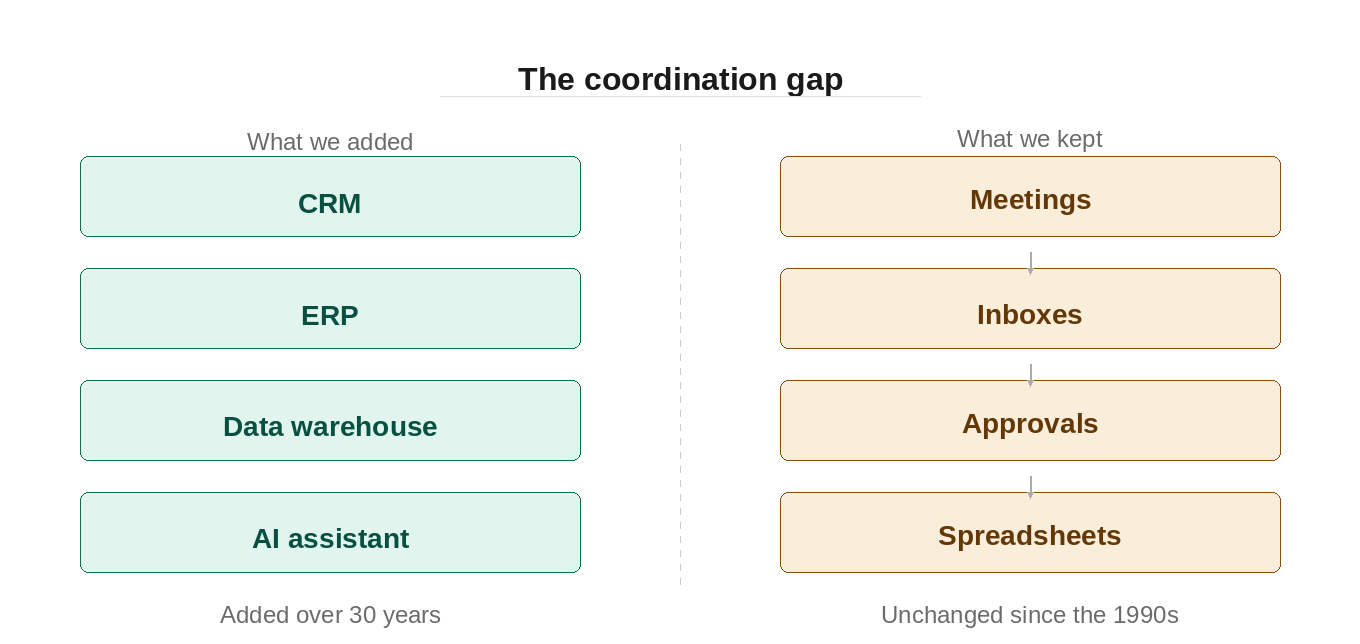

What makes the current deployment pattern worth examining closely is that isolated tools can make this structural problem harder to see, not easier to fix. Each tool carries its own context and generates its own outputs. The analyst's assistant does not share state with legal's platform. The contract drafted quickly in one system still has to be moved, contextualized, and reformatted by a person before it enters the next. The data fragmentation problem that enterprises spent years trying to partially address gets reproduced at a new layer: AI silos sitting on top of data silos, each with its own context window, none of them connected to the next step in the process.

More tools. Same translation layer. More content produced faster, delivered to the same coordination bottleneck that was there before.

The failure mode is subtle, which is what makes it durable. Individual output metrics improve. Dashboards look better. The cost (decision latency, errors at hand-off points, the overhead of reconstructing context at every transition) is structural and accumulates slowly. It shows up in the aggregate, not on the reports that track individual productivity. Companies mistake the local productivity signal for a systemic one. The AI programme is rated a success while the coordination problem deepens quietly beneath it.

There is also a consistency dimension that tends to go unnoticed until it causes a problem. Coordination requires that the same rules apply regardless of who is handling a task on a given day. The approval threshold that applies on a Monday applies on a Friday. The same escalation path applies whether the person managing the work is senior or new to the role. Assistants do not hold or enforce that consistency. Each session starts without memory of the last. Each person applies their own interpretation of the process. That inconsistency is part of the coordination cost, and it remains invisible until an exception surfaces it.
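One way to picture the consistency requirement is a rule that lives in the system instead of in each person's head. The sketch below is a hypothetical illustration: the `ApprovalPolicy` class, the 10,000 threshold, and the `finance-director` escalation target are all invented for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ApprovalPolicy:
    """A single shared rule; frozen so it cannot be altered per session."""
    auto_approve_limit: float
    escalate_to: str

    def route(self, amount: float) -> str:
        if amount <= self.auto_approve_limit:
            return "auto-approve"
        return f"escalate:{self.escalate_to}"

# Hypothetical values, for illustration only.
POLICY = ApprovalPolicy(auto_approve_limit=10_000, escalate_to="finance-director")

def decide(amount: float, handler: str, weekday: str) -> dict:
    # handler and weekday are recorded for the audit trail,
    # but they play no part in the decision itself.
    return {"handler": handler, "weekday": weekday, "decision": POLICY.route(amount)}

monday = decide(12_000, handler="senior manager", weekday="Monday")
friday = decide(12_000, handler="new hire", weekday="Friday")
print(monday["decision"], friday["decision"])
```

Both calls reach the same decision because the threshold is applied by the policy, not reinterpreted by whoever happens to be handling the request.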

None of this is an argument against AI assistants. For individual task speed, they are frequently the right investment and the gains are real. The argument is narrower: a productivity tool cannot substitute for a coordination system, and treating it as though it can leads to planning decisions that compound over time. Teams that make this mistake once tend to make it again, adding more local capability while the cross-team, cross-system coordination challenges go unaddressed.

The useful question is not how to make an individual assistant smarter. A smarter assistant still operates at the individual level. The useful question is what category of system actually addresses the coordination requirement, and what it needs to provide in terms of goal decomposition, task routing, shared state, approval handling, and end-to-end observability. Those are system properties. They require a system-level answer.

What that answer looks like in architecture is where the argument goes next.

Related reading

"The coordination debt that's quietly costing enterprises their edge" establishes the structural framing this post builds on: that the bottleneck is coordination rather than individual capability, and that the problem is invisible on individual dashboards but visible at the system level.

Next in the series: "From static workflows to agentic operating systems" takes the architectural requirement introduced here and begins to describe the category of system that can actually meet it.