A company wants to change its pricing model. The data exists, the analysis is ready, and the decision is made. What follows is predictable: a pricing approval workflow routes the proposal to three managers for sequential sign-off, then to finance for impact analysis, then to IT to update systems. Each handoff is tracked. The workflow executes exactly as designed.

Then a competitor announces a price drop. The proposal is now outdated. The workflow has no mechanism to detect this, no authority to adapt, and no option except to restart from the beginning or pass the problem to a human who can override the entire sequence.

This is the structural limit of process automation. Predefined sequences execute efficiently, but they cannot respond to conditions they were not explicitly programmed to anticipate. Every exception becomes a human problem.

Contrast this with a system built around goal decomposition and dynamic routing. The same pricing change begins with a stated objective: update pricing to match new market conditions. A coordination layer decomposes this into tasks: validate pricing logic, assess financial impact, check regulatory constraints, prepare system updates, route for approval at the appropriate threshold.

Each task goes to a specialist. A financial analysis actor evaluates margin impact. A compliance actor checks regulatory thresholds. The system determines whether the change requires board sign-off or can proceed with VP approval based on the materiality of the decision, not a fixed rule about who signs what.

When the competitor price drop arrives mid-process, the system does not restart. It triggers a reassessment task. The financial analysis updates. The approval threshold is re-evaluated. If the new conditions push the decision above the materiality threshold, the system routes it to the appropriate authority. If not, the process continues. The system adapts within its defined scope rather than defaulting to human intervention for every deviation.
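The reassessment step can be made concrete with a small sketch. Everything here is an illustrative assumption rather than a description of any real system: the `PricingProposal` fields, the `BOARD_THRESHOLD` figure, and the use of projected annual revenue change as a crude stand-in for materiality.

```python
from dataclasses import dataclass

# Hypothetical materiality threshold: a projected annual revenue change above
# this amount needs board sign-off; anything below it needs VP approval.
BOARD_THRESHOLD = 1_000_000.0


@dataclass(frozen=True)
class PricingProposal:
    units_per_year: int
    current_price: float
    proposed_price: float


def revenue_impact(p: PricingProposal) -> float:
    """Absolute projected annual revenue change (a deliberately crude proxy)."""
    return abs(p.proposed_price - p.current_price) * p.units_per_year


def required_approver(p: PricingProposal) -> str:
    """Route on materiality, not on a fixed rule about who signs what."""
    return "board" if revenue_impact(p) > BOARD_THRESHOLD else "vp"


def reassess(p: PricingProposal, competitor_price: float) -> PricingProposal:
    """Assumed reassessment policy: match the competitor if they undercut us."""
    new_price = min(p.proposed_price, competitor_price)
    return PricingProposal(p.units_per_year, p.current_price, new_price)


proposal = PricingProposal(units_per_year=100_000, current_price=50.0, proposed_price=45.0)
before = required_approver(proposal)                  # impact: 500,000
updated = reassess(proposal, competitor_price=38.0)   # mid-process market event
after = required_approver(updated)                    # impact: 1,200,000
print(before, "->", after)
```

The original proposal stays under the assumed threshold and routes to a VP. After the competitor event the same routing function is applied to the updated proposal, and the decision moves to the board without restarting anything.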

This is not automation in the traditional sense. It is coordinated collaboration between specialised software actors, each with a single job, operating under shared context and explicit constraints.

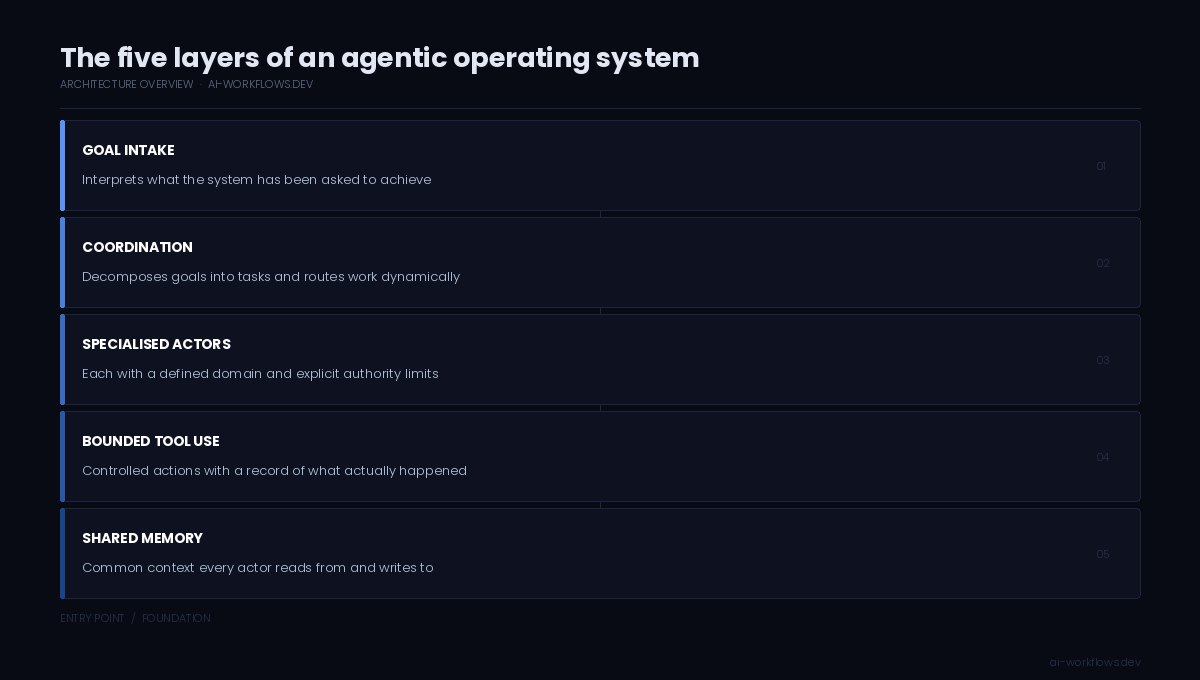

The five layers that make this possible

A system of this kind requires five components working together.

Goal intake translates business intent into structured objectives the system can act on. This is where a pricing change, a supplier onboarding request, or a compliance review begins. The goal must be specific enough to decompose but not prescriptive about how to achieve it.
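As a rough illustration, a structured objective might look like the sketch below. The `Goal` type, its field names, and the `intake_problems` check are all hypothetical. The point is only that the goal carries success criteria and constraints but no list of steps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Goal:
    """A structured objective. There is deliberately no 'steps' field:
    the goal states what must be true, not how to get there."""
    objective: str
    success_criteria: tuple[str, ...]
    constraints: tuple[str, ...] = ()


def intake_problems(goal: Goal) -> list[str]:
    """Return reasons the goal is not yet specific enough to decompose."""
    problems = []
    if not goal.objective.strip():
        problems.append("objective is empty")
    if not goal.success_criteria:
        problems.append("no success criteria, so the goal cannot be decomposed")
    return problems


pricing_goal = Goal(
    objective="Update pricing to match new market conditions",
    success_criteria=("new price list is live", "margin impact is assessed"),
    constraints=("stay within regulatory thresholds",),
)
vague_goal = Goal(objective="Fix pricing", success_criteria=())

print(intake_problems(pricing_goal))
print(intake_problems(vague_goal))
```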

Coordination decomposes goals into tasks, routes each task to the appropriate specialist, manages sequencing and parallelism, handles retries and timeouts, and inserts approval checkpoints where required. This layer holds the execution logic of the system: what needs to happen, in what order, and under what conditions.
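One way to picture the sequencing and parallelism part is as a dependency graph over tasks. The sketch below uses Python's standard `graphlib.TopologicalSorter`. The task names follow the pricing example, but the dependencies between them are assumptions made for illustration, and retries, timeouts, and approval checkpoints are left out.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the set of tasks it depends on.
PRICING_TASKS = {
    "validate_pricing_logic": set(),
    "assess_financial_impact": {"validate_pricing_logic"},
    "check_regulatory_constraints": {"validate_pricing_logic"},
    "route_for_approval": {"assess_financial_impact", "check_regulatory_constraints"},
    "prepare_system_updates": {"route_for_approval"},
}


def execution_waves(tasks: dict) -> list:
    """Group tasks into waves: tasks in the same wave have no unmet
    dependencies on each other and could run in parallel."""
    sorter = TopologicalSorter(tasks)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave complete to unlock the next
    return waves


waves = execution_waves(PRICING_TASKS)
for number, wave in enumerate(waves, start=1):
    print(number, wave)
```

Under these assumed dependencies, financial and regulatory analysis land in the same wave, so a coordinator could dispatch them to their specialists at the same time.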

Specialised software actors execute discrete tasks within their defined scope. A financial analysis actor assesses margin impact but does not approve the decision. A compliance actor checks regulatory constraints but does not modify pricing logic. Each actor has a single job, a clear set of permitted actions, and an explicit path for escalating what it cannot handle.
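A minimal sketch of that scoping, with hypothetical names throughout (`Actor`, `Escalation`, the action strings): each actor carries an explicit set of permitted actions, and anything outside that set is escalated rather than attempted.

```python
from dataclasses import dataclass


class Escalation(Exception):
    """Raised when an actor is asked to act outside its defined scope."""


@dataclass(frozen=True)
class Actor:
    name: str
    permitted_actions: frozenset
    escalate_to: str  # where out-of-scope requests are sent

    def perform(self, action: str, payload: dict) -> dict:
        if action not in self.permitted_actions:
            raise Escalation(
                f"{self.name} cannot '{action}'; escalate to {self.escalate_to}"
            )
        # A real actor would do its work here; the sketch just echoes the request.
        return {"actor": self.name, "action": action, "payload": payload}


finance = Actor(
    name="financial_analysis",
    permitted_actions=frozenset({"assess_margin_impact"}),
    escalate_to="coordination",
)

result = finance.perform("assess_margin_impact", {"proposed_price": 45.0})

try:
    finance.perform("approve_decision", {})
    escalated = False
except Escalation as exc:
    escalated = True
    reason = str(exc)

print(result["action"], escalated)
```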

Bounded tool use mediates every external action. When an actor needs to write to a database, send a notification, or call an external service, the system checks whether the action is permitted, logs it, determines whether approval is required, and only then proceeds. This is where the boundary between recommendation and execution is enforced.
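The check, log, approve, proceed sequence could be sketched as a single gateway that every external call passes through. The `ToolGateway` class, its policy format, and the actor and tool names are assumptions for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class ToolGateway:
    # Maps (actor, tool) to True when human approval is required, False when
    # it is not. A pair missing from the mapping is not permitted at all.
    policy: dict
    audit_log: list = field(default_factory=list)

    def call(self, actor, tool, fn, *args, approved=False):
        key = (actor, tool)
        if key not in self.policy:
            self.audit_log.append((actor, tool, "denied"))
            raise PermissionError(f"{actor} may not use {tool}")
        if self.policy[key] and not approved:
            # The action stays a recommendation until someone approves it.
            self.audit_log.append((actor, tool, "awaiting_approval"))
            return None
        self.audit_log.append((actor, tool, "executed"))
        return fn(*args)


prices = {}


def update_price(sku, price):
    """Stand-in for a real database write."""
    prices[sku] = price
    return price


gateway = ToolGateway(policy={("pricing_actor", "update_price_table"): True})

first = gateway.call("pricing_actor", "update_price_table", update_price, "SKU-1", 45.0)
second = gateway.call(
    "pricing_actor", "update_price_table", update_price, "SKU-1", 45.0, approved=True
)
print(first, second, gateway.audit_log)
```

The first call is logged but not executed because the policy requires approval. Only the second, approved call reaches `update_price`.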

Shared memory ensures that every component operates on the same state. When the competitor pricing event arrives, it is not siloed in one actor's view. It is written to shared state that every component can read. The financial actor sees it. The coordination layer sees it. The approval logic sees it. Decisions are made on consistent information, not fragmented snapshots reconstructed from email threads.
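A minimal in-process sketch of the idea follows. `SharedState` and its methods are hypothetical, and a production system would more likely use a durable store. What it shows is a single versioned state that every subscriber is notified about and can read consistently.

```python
import threading


class SharedState:
    """One versioned key-value state that all components read and write."""

    def __init__(self):
        self._lock = threading.Lock()
        self._data = {}
        self._version = 0
        self._subscribers = []

    def subscribe(self, callback):
        with self._lock:
            self._subscribers.append(callback)

    def write(self, key, value):
        with self._lock:
            self._data[key] = value
            self._version += 1
            version = self._version
            subscribers = list(self._subscribers)
        # Notify outside the lock so a callback can safely read the state.
        for notify in subscribers:
            notify(key, value, version)
        return version

    def snapshot(self):
        """Return (version, copy of the data) as one consistent view."""
        with self._lock:
            return self._version, dict(self._data)


state = SharedState()
seen_by = {"finance": [], "approval": []}
state.subscribe(lambda k, v, ver: seen_by["finance"].append((k, v, ver)))
state.subscribe(lambda k, v, ver: seen_by["approval"].append((k, v, ver)))

state.write("proposed_price", 45.0)
state.write("competitor_price", 38.0)  # the mid-process market event
version, view = state.snapshot()
print(version, view)
```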

Together, these five components constitute an operating system for business coordination: not a chatbot or a workflow engine, but a reusable foundation on which business capabilities run.

What this replaces

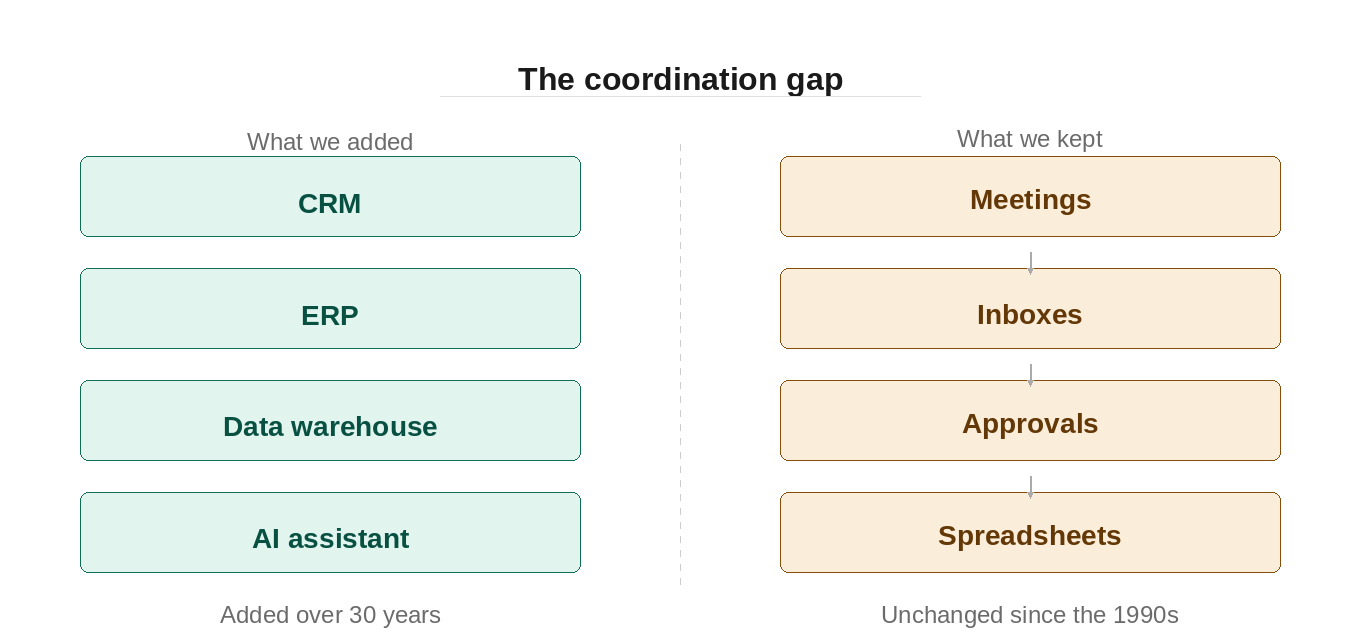

This does not replace the company. It replaces the coordination model that forces humans to act as middleware between systems.

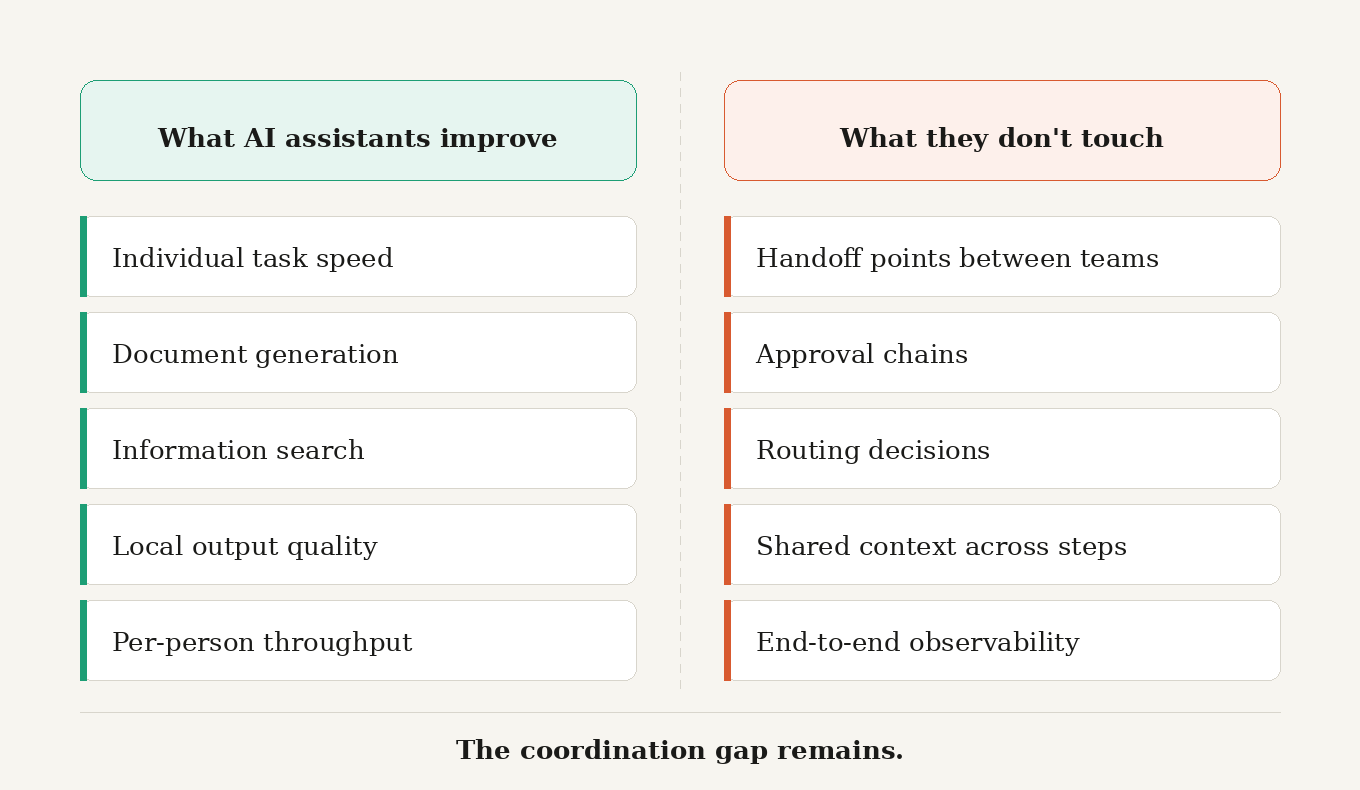

In the current model, humans route information, translate formats, reconcile state, chase approvals, and escalate exceptions because the systems cannot do this themselves. The coordination cost is invisible on individual dashboards but visible at the system level: decision latency, context lost at handoff points, and time spent reconstructing shared understanding that the software does not preserve.

A coordinated system handles the routing, translation, and state management. Humans move to where judgment genuinely matters: setting policy, managing exceptions that fall outside the system's defined scope, and making decisions the system is explicitly designed not to make on its own.

The shift is from software as a passive toolset to software as an active participant in execution. The business still decides what matters. The operating system ensures it happens consistently, traceably, and within the constraints the business defines.

The substrate must be reusable

An operating system is infrastructure, not a vertical application. The coordination and observability primitives that make a pricing workflow auditable are the same primitives that make a supplier onboarding workflow auditable. The layer that determines whether a financial decision requires board approval uses the same mechanism that checks whether a supplier contract requires legal review.
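To illustrate what sharing a mechanism across domains might mean in practice, the sketch below defines one generic escalation rule and configures it twice. The `EscalationRule` type, the field names, the thresholds, and the approver labels are all invented for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EscalationRule:
    """Domain-agnostic mechanism: measure an item, compare it with a
    threshold, and pick an approver."""
    measure: Callable[[dict], float]
    threshold: float
    above: str
    below: str

    def route(self, item: dict) -> str:
        return self.above if self.measure(item) > self.threshold else self.below


# Only this configuration is domain-specific; the mechanism is shared.
RULES = {
    "pricing": EscalationRule(
        measure=lambda d: abs(d["revenue_impact"]),
        threshold=1_000_000,
        above="board",
        below="vp",
    ),
    "supplier_contract": EscalationRule(
        measure=lambda d: d["contract_value"],
        threshold=250_000,
        above="legal_review",
        below="procurement_lead",
    ),
}


def route(domain: str, item: dict) -> str:
    return RULES[domain].route(item)


pricing_route = route("pricing", {"revenue_impact": -1_200_000})
supplier_route = route("supplier_contract", {"contract_value": 80_000})
print(pricing_route, supplier_route)
```

Adding a new domain in this sketch means adding an entry to `RULES`, not writing a new routing layer.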

If each business domain rebuilds its own coordination layer, its own controls, its own observability stack, the result is not an operating system. It is a collection of domain-specific scripts with no shared foundation. The coordination cost moves from humans to engineering teams rebuilding the same infrastructure in different shapes.

A layer governing business execution must work across domains. The business logic changes. The coordination, the controls, the audit trail, and the common context model do not. That separation is what makes the system reusable rather than bespoke.

The question for any organisation deploying software agents is not whether this model is possible. It is whether the infrastructure they are building today will become that reusable layer, or fragment into the next generation of siloed tools that require human middleware to connect.

Related reading

"The coordination debt that's quietly costing enterprises their edge" establishes why coordination, not individual productivity, is the structural problem this post addresses.

"Why AI assistants don't solve the coordination problem" explains why single-agent tools cannot address the multi-party coordination challenge described here.

Next in the series: The first platform built as a governed operating layer for business execution.